Well, maybe not all in the writing, but many common mistakes made in developing surveys come from inexact wording of questions. This is according to Fred Van Bennekom, principal of Great Brook Consulting, who gave an excellent presentation—The Dirty Dozen of Common Survey Mistakes—at this month’s meeting of the Capital Area Chapter of HDI, a membership association for people who work in IT support.

Here are some of the common writing-related survey mistakes Fred described:

- Double-barreled questions. Here’s an example from a local government’s land use survey: “Our town should ensure the protection of critical natural resources and wildlife habitats in land use decisions and policies.” If a respondent agrees that the town should protect resources but doesn’t think it should protect habitats, he’s stuck. Because the question is double-barreled, he has to give a single answer about both.

- Overuse of open-ended questions. These questions invite, and require, a lot of writing. Too many of them will tire respondents out. Besides, the information you gather in open-ended questions is harder to compile.

- Poor instructions on how to show a response. Should respondents check a box, circle a term, or rank options in order of importance? If you aren’t very clear about what to do, respondents will do things the wrong way and you’ll have to toss out their feedback.

- Questions written in loaded language. You may already know what survey results you’re hoping for, but, among other sins, loaded questions waste respondents’ time. Here’s a biased example Fred shared, drawn from the land use survey: “Agree or disagree with the following statement: ‘The population growth in our town is stressing town services and educational facilities.’” Hmmm…I wonder whether they want me to agree or disagree.

- Questions not worded to the scale. If the survey offers respondents an Excellent-Satisfactory-Poor scale, it should not ask yes-no questions such as “Was the audit conducted in a professional manner?”

- Incomplete response options. Some surveys fail to offer the range of options a respondent will need. Fred shared an example from a customer satisfaction survey he completed after a stay in a luxury hotel. One of the items he was asked to rate: “Ability of the staff to anticipate your needs.” The survey should have included a Not Applicable option, as guests may have had all their needs met without any evidence that staff had anticipated them. And honestly, how would guests know that staff had anticipated their needs?

Does the Macys.com/tellus survey avoid common mistakes?

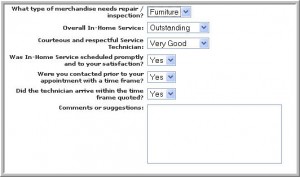

With Fred’s advice still resonating, I rifled through the huge collection of receipts in my wallet to find one that listed a survey URL so I could weigh in on my experience as a customer. My Macy’s receipt led me to this survey. At first glance, I thought the Macy’s survey generally followed the guidelines Fred provided. The questions are clear enough, the response options match the questions, and I’m given the chance to provide comments. On closer inspection, Macy’s could improve the writing a bit:

- The capitalization is inconsistent (see “technician”).

- The question about scheduling in-home service is double-barreled, asking about both promptness and satisfaction.

- The choice of the word “quoted” is interesting. Was the survey too timid to use the word “promised”?

Now that we’ve opened the topic of survey writing, we’re inviting you to share. Send us examples of well-written, or badly written, surveys. We’re especially interested in online surveys that solicit customer responses.

— Leslie O’Flahavan


I would like to compliment an exceptional employee at Macy’s (Farzaneh Saee, Calvin Klein Linens Selling Specialist), who helped me purchase a new comforter, pillows, sheets, shams, etc. There were some issues related to a late shipment, and Farzaneh helped take care of the matter with improved credit for sales. She was also very helpful in picking the right items to make it all work. I’m selective about these kinds of things (I spent $2,000 on a new mattress, springs and covers), so her knowledge, attentiveness and help made all the difference. I would highly recommend that you at least commend her for her efforts. I’m in management at Boeing and seek people like her for management positions. Given that “others” in the area were not that interested in the matter, it would be wise to consider her for higher levels at Macy’s. Her service made the difference between my pulling the plug and buying these items elsewhere or sticking with Macy’s, period. Please let her know in some capacity that she did well. Thank you.